The future of AI is personal. As users grow accustomed to AI tools, they increasingly expect those tools to be personalized and agentic. Whether it is an AI assistant recalling your past conversations, a legal tool reviewing a specific company's contracts, or a personal knowledge base searching through your private documents, these applications all rely on one core capability: providing "memory" specific to a single user or business.

In software architecture, this is known as multitenancy: a single instance of software serving multiple isolated customers, or tenants. However, building vector search for multitenant applications has historically introduced complex performance and scaling challenges. Today, we are thrilled to announce Flat Indexes for Vector Search, a new capability in MongoDB Atlas that provides enhanced support for multitenant workloads, delivering improved performance, recall, and resource efficiency.

The multitenant vector search challenge

To understand why we built Flat Indexes, it helps to understand how vector databases traditionally work. By default, MongoDB Vector Search on Atlas uses HNSW (Hierarchical Navigable Small World) graphs. HNSW is well suited to approximate nearest neighbor (ANN) search across massive, shared datasets containing millions of vectors.

However, multitenant applications (like B2C and B2B SaaS) operate differently. In a multitenant app, you might have hundreds of thousands of users, but each user has only a relatively small set of personal vectors (often fewer than 10,000). When a user asks their AI a question, the database filters the query down to just that user's data.

HNSW indexes weren’t designed for highly selective, per-tenant queries. Using them for heavily filtered vector search introduces several challenges:

Poor filtered accuracy: HNSW builds full graphs without considering filters, which leads to degraded accuracy when filtering to small tenant partitions.

Unnecessary graph overhead: When a highly selective filter reduces the search to a small tenant partition (e.g., a single user's few thousand vectors), the search becomes an exhaustive scan anyway, so the HNSW graph adds build and maintenance cost without improving the query.

The "noisy neighbor" problem: Because HNSW graphs are built across all tenants, updating data for one tenant can cause expensive graph traversals that impact the read and write performance of entirely different tenants.

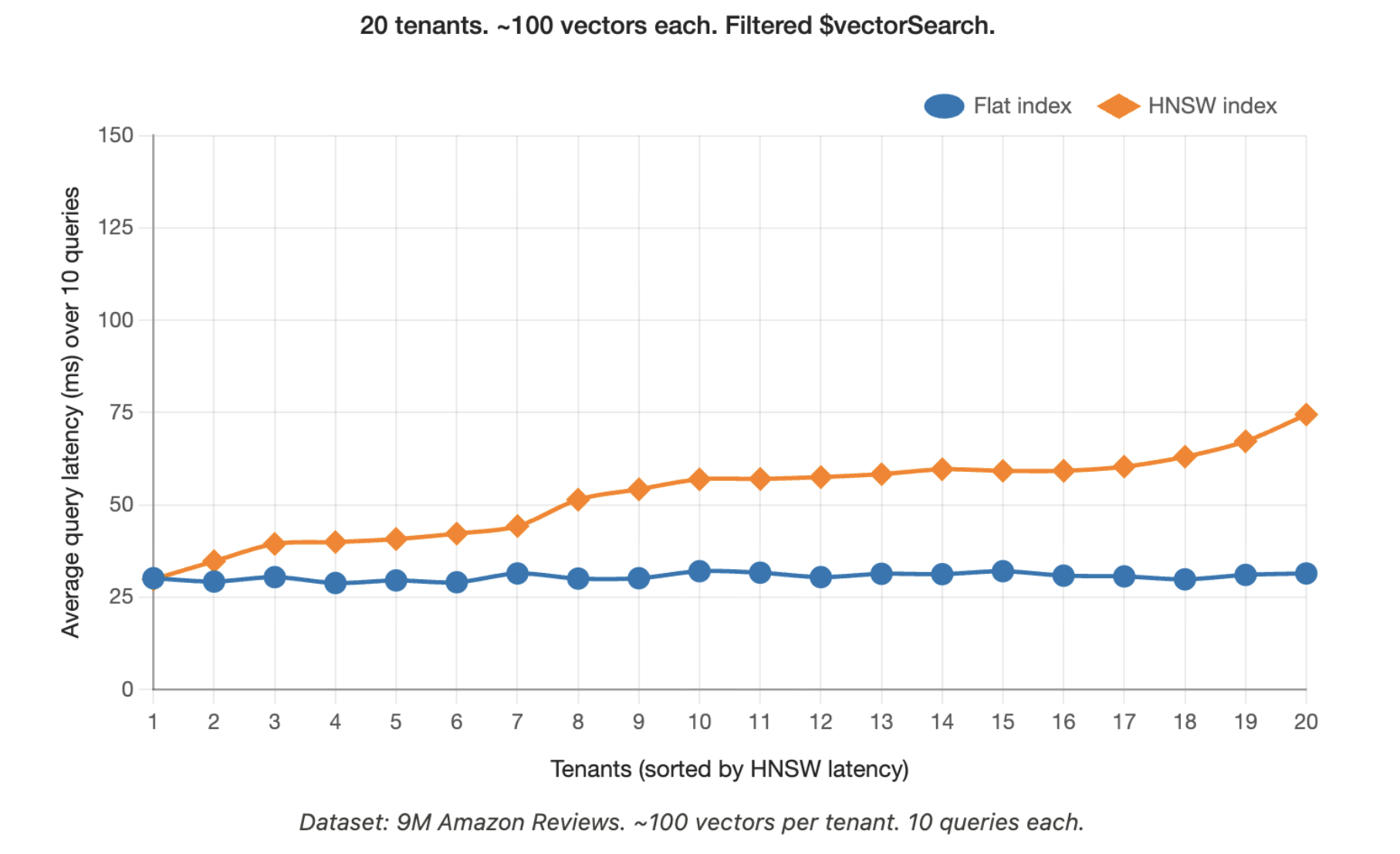

Variable performance: HNSW query times can vary by up to 3x across tenants. For example, a large tenant that occupies 10% of the index and a small tenant that occupies 1% can see very different query latency.

The solution: Flat Indexes

To better enable AI apps serving millions of users, we are introducing a new indexingMethod parameter that allows developers to choose a Flat Index instead of the default HNSW graph.

A Flat Index is a simpler index type that performs an exhaustive search. Instead of navigating a complex graph, a flat index quickly scans the exact, filtered subset of data belonging to the specific tenant.
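To make the idea of an exhaustive, filtered scan concrete, here is a minimal conceptual sketch in plain Python. It is not how MongoDB implements Flat Indexes internally; it only illustrates the two steps described above: narrow the data to one tenant, then score every remaining vector. The field names `tenant_id` and `embedding`, and the choice of cosine similarity, are assumptions made for the example.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def flat_search(documents, tenant_id, query_vector, limit):
    """Exhaustively score one tenant's vectors and return the top `limit` document ids."""
    # Step 1: apply the tenant filter so other tenants' data is never scored.
    candidates = [d for d in documents if d["tenant_id"] == tenant_id]
    # Step 2: score every remaining vector; there is no graph to traverse.
    scored = [(cosine_similarity(d["embedding"], query_vector), d["_id"]) for d in candidates]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc_id for _, doc_id in scored[:limit]]


# Hypothetical toy data: two tenants sharing one collection.
docs = [
    {"_id": 1, "tenant_id": "a", "embedding": [1.0, 0.0]},
    {"_id": 2, "tenant_id": "a", "embedding": [0.0, 1.0]},
    {"_id": 3, "tenant_id": "b", "embedding": [1.0, 0.0]},
]

top_for_a = flat_search(docs, "a", [0.9, 0.1], limit=1)
```

Because every vector in the tenant's partition is compared against the query, the result within that partition is exact. The cost grows linearly with the partition size, which is why this approach suits small, bounded tenants.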

Key benefits:

Optimized for selective filters: For highly selective queries (where each tenant has only a few thousand vectors), an exhaustive scan is already the fastest path. Flat indexes embrace this directly, improving both latency and recall.

Predictable performance for bounded partitions: Query latency with a flat vector search index stays within a tight band regardless of which tenant is targeted, with no noisy-neighbor effects across tenants.

Resource efficiency: Flat indexes eliminate the memory bloat and maintenance overhead associated with building HNSW graphs, leading to lower memory usage and compute requirements at write time.

Simple migration path: Switch indexingMethod from 'hnsw' to 'flat'; no query or data changes are required.
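As a rough illustration of the migration step, the sketch below takes an existing index definition as a Python dictionary and returns a copy with indexingMethod set to 'flat' on each vector field. The helper name `switch_to_flat` is hypothetical, and the surrounding definition layout (`fields`, `type`, `path`, `numDimensions`, `similarity`) and the field paths are assumptions based on the usual vector search index definition shape. Only the `indexingMethod` values come from this announcement.

```python
import copy


def switch_to_flat(definition):
    """Return a copy of an index definition with vector fields set to the flat method."""
    updated = copy.deepcopy(definition)  # leave the caller's definition untouched
    for field in updated.get("fields", []):
        # Only vector fields carry an indexing method; filter fields are left alone.
        if field.get("type") == "vector":
            field["indexingMethod"] = "flat"
    return updated


# Hypothetical existing definition that uses the default graph-based method.
existing = {
    "fields": [
        {
            "type": "vector",
            "path": "embedding",
            "numDimensions": 1536,
            "similarity": "cosine",
            "indexingMethod": "hnsw",
        },
        {"type": "filter", "path": "tenant_id"},
    ]
}

migrated = switch_to_flat(existing)
```

The updated definition would then be applied through whichever index management path you already use; the queries and the stored documents stay as they are.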

Figure 1: Query latency for Flat Indexes vs HNSW

When to use Flat vs. HNSW indexes

We recommend using Flat Indexes if your application fits the following profile:

You have many tenants (up to 1 million tenant IDs).

Each tenant has relatively few vectors (fewer than ~10,000 vectors per tenant).

Your vector search queries are always filtered down to a specific tenant.

Conversely, you should stick to the default HNSW Indexes if:

You are searching across large volumes of shared data that multiple users query together (like a global product catalog).

Your individual tenants are very large (more than 10,000 vectors). Note: For these larger tenants, we recommend creating dedicated HNSW indexes on separate views to isolate them from smaller tenants.
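The guidance above can be condensed into a small decision helper. This is only a sketch of the rules of thumb in this post, not an official sizing tool; the function name, parameter names, and constants are hypothetical, and the thresholds mirror the approximate figures quoted above.

```python
# Approximate thresholds taken from the guidance above.
MAX_TENANTS_FOR_FLAT = 1_000_000
MAX_VECTORS_PER_TENANT_FOR_FLAT = 10_000


def recommend_indexing_method(tenant_count, max_vectors_per_tenant, always_filtered_by_tenant):
    """Return 'flat' when the workload matches the multitenant profile, else 'hnsw'."""
    fits_flat_profile = (
        always_filtered_by_tenant
        and tenant_count <= MAX_TENANTS_FOR_FLAT
        and max_vectors_per_tenant < MAX_VECTORS_PER_TENANT_FOR_FLAT
    )
    return "flat" if fits_flat_profile else "hnsw"


# A consumer assistant: many small tenants, every query scoped to one user.
assistant_choice = recommend_indexing_method(200_000, 3_000, True)

# A global product catalog: shared data, queries are not scoped to a tenant.
catalog_choice = recommend_indexing_method(1, 5_000_000, False)
```

Workloads that mix many small tenants with a few very large ones fall outside this simple check; for those, the note above about dedicated HNSW indexes on separate views applies.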

How it works

Implementing a flat index is straightforward. When defining your vector search index, you add "indexingMethod": "flat" to your vector field definition.
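As an illustration, an index definition with a flat vector field might be assembled as follows. Treat this as a sketch under assumptions: the helper name, the field paths (`embedding`, `tenant_id`), the dimension count, and the similarity function are hypothetical placeholders, and the filter field is included because tenant-scoped queries need a field to filter on. Only the `indexingMethod` setting is taken from this announcement.

```python
import json


def build_flat_index_definition(vector_path, num_dimensions, tenant_path, similarity="cosine"):
    """Build a vector search index definition whose vector field uses the flat method."""
    return {
        "fields": [
            {
                "type": "vector",
                "path": vector_path,
                "numDimensions": num_dimensions,
                "similarity": similarity,
                # The new parameter described in this post.
                "indexingMethod": "flat",
            },
            # Index the tenant identifier so queries can filter on it.
            {"type": "filter", "path": tenant_path},
        ]
    }


definition = build_flat_index_definition("embedding", 1536, "tenant_id")
print(json.dumps(definition, indent=2))
```

With PyMongo, a dictionary like this could be wrapped in a `SearchIndexModel` with `type="vectorSearch"` and passed to `Collection.create_search_index`, assuming a driver version that supports vector search index types. Check the Vector Search documentation for the exact options available in your environment.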

There are no changes required for your query syntax; you will continue to use the standard $vectorSearch operator. Furthermore, Flat Indexes are fully compatible with MongoDB's latest features, including Automated Embedding and both scalar and binary quantization (which automatically rescans and rescores quantized values for maximum speed and accuracy).
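For reference, a tenant-scoped query might be built like the sketch below. It follows the standard $vectorSearch stage shape; the helper name, index name, field paths, and default values are hypothetical. This post does not say how `numCandidates` is treated with a flat index, so it is kept here only to mirror an unchanged query.

```python
def build_tenant_query(index_name, tenant_id, query_vector, limit=5, num_candidates=100):
    """Build an aggregation pipeline that searches a single tenant's vectors."""
    return [
        {
            "$vectorSearch": {
                "index": index_name,
                "path": "embedding",  # hypothetical vector field path
                "queryVector": query_vector,
                # Scope the search to one tenant; the field must be indexed as a filter.
                "filter": {"tenant_id": {"$eq": tenant_id}},
                "numCandidates": num_candidates,
                "limit": limit,
            }
        },
        # Return the document id together with its similarity score.
        {"$project": {"_id": 1, "score": {"$meta": "vectorSearchScore"}}},
    ]


pipeline = build_tenant_query("tenant_vectors", "tenant-42", [0.1, 0.2, 0.3])
```

The resulting list can be passed to an aggregation call such as PyMongo's `Collection.aggregate`. The same pipeline applies whether the underlying index uses 'hnsw' or 'flat'.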

Looking ahead: The future of multitenancy

Flat Indexes represent the first phase of a larger, long-term commitment to making MongoDB the ultimate platform for multitenant AI applications, with additional features and functionality coming later this year.

Flat Indexes are available today in Public Preview for all Atlas users across AWS, Google Cloud, and Microsoft Azure, as well as in MongoDB Search and Vector Search in Community Edition.

Next steps

Ready to get started? Check out our updated Vector Search documentation to learn how you can optimize your AI applications today.