The AI industry's obsession with full autonomy is the single biggest obstacle to getting agents into production.

Despite billions in investment and countless impressive prototypes, most organizations still can't deploy AI agents that work reliably beyond controlled environments. Token-driven loops drift unpredictably. Prompts degrade with each model update. Context windows become polluted with irrelevant information. State management fails across sessions.

What works brilliantly in a demo breaks down when faced with the messy realities of enterprise environments.

And yet, single-agent reliability is actually the easy version of this challenge. As the industry races toward multi-agent architectures—teams of specialized sub-agents coordinated by supervisor agents—the autonomy problem doesn't just persist, it compounds.

The organizations that are actually getting agents into production have stopped chasing full autonomy entirely. They've found a more effective path: bounded autonomy, where agents have enough freedom to deliver real value but are constrained enough to be reliable and safe. The future of autonomous agents doesn't require choosing between full autonomy and rigid control. It requires building progressively toward autonomy on a foundation of reliable infrastructure.

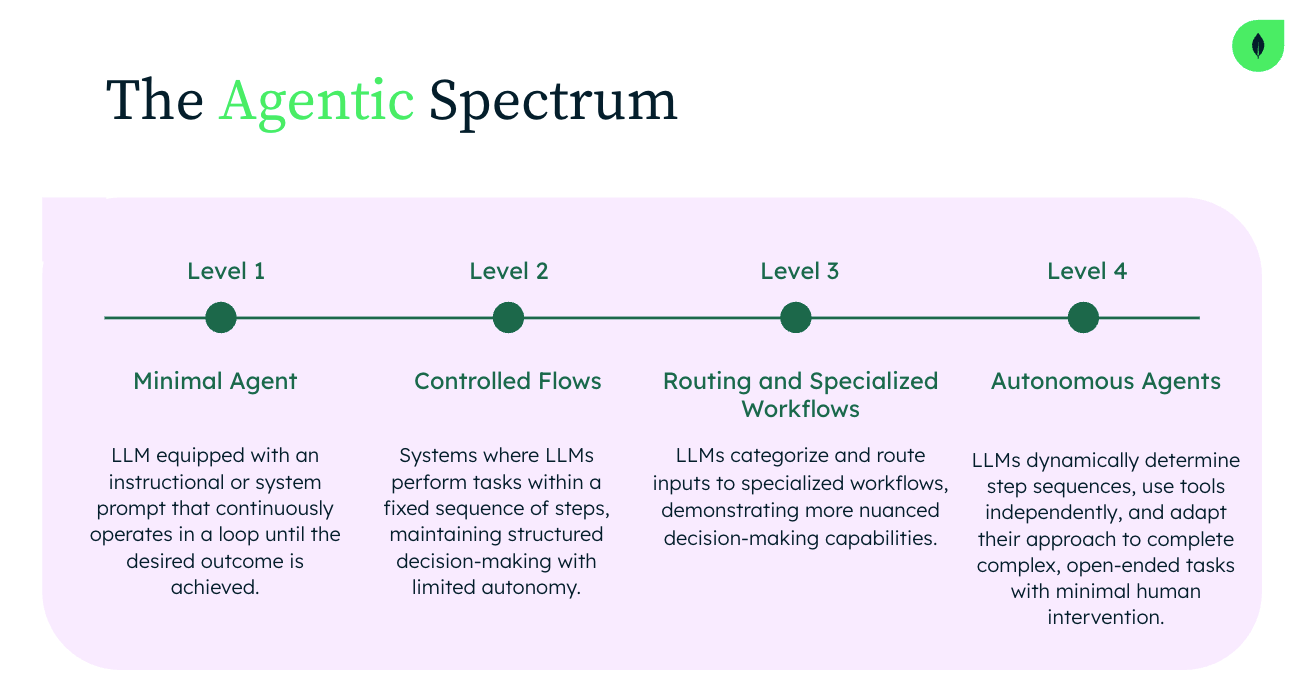

The autonomy spectrum: why the middle wins

This reliability crisis has created a false choice in AI development: full autonomy or rigid deterministic control. Many practitioners have pulled back from autonomy entirely, choosing predictable workflows over adaptive intelligence. Others keep pushing toward full autonomy, fail repeatedly, and wonder why their agents can't survive contact with production.

The successful path goes through the middle. Bounded autonomous agents operate within carefully defined parameters—intelligent enough to handle complexity and variation, yet constrained enough to be trustworthy and safe.

Rather than waiting to achieve full autonomy, the leading organizations are building production experience with bounded agents, earning trust through demonstrated reliability and gradually expanding capabilities. Bounded autonomy isn't a compromise—it's the only strategy that delivers value today while building toward fuller autonomy tomorrow.

This matters because the four fundamental challenges facing autonomous agents—state management fragility, context pollution and drift, lack of deterministic control, and memory neglect—don't disappear with better models. They require better infrastructure. And they get dramatically harder when you move from a single agent to a team.

From single agents to agent teams: why bounded autonomy becomes mandatory

Coding agents are the canary in this coal mine. Tools like Devin, Copilot Workspace, and Claude Code already operate as multi-agent systems with supervisor patterns—orchestrating specialized sub-agents for planning, implementation, testing, and review. They work because software development is a domain where the worst case is bad code that gets caught in review.

Now consider what happens when multi-agent patterns reach construction, manufacturing, supply chain, and financial services—domains where a bad autonomous decision has physical, safety, or regulatory consequences. These aren't hypothetical scenarios. Multi-agent architectures are already moving from the frontier of coding tools toward more traditional industries, where risks are higher and governance and compliance requirements are non-negotiable.

A supervisor agent coordinating sub-agents in these environments needs to understand the autonomy boundaries of each sub-agent it orchestrates. It needs to track which decisions were made autonomously versus with human approval. It needs to maintain causal audit trails across the entire agent team—not just individual decision logs, but a causal graph of which agent's output became which other agent's input, and what autonomy level governed each decision. And it needs to know precisely when to escalate.
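To make the idea of a causal audit trail concrete, here is a minimal Python sketch. It is an illustration under assumptions, not a prescribed design: the `Decision` and `AuditTrail` classes, the field names, and the agent names are all hypothetical. Each decision records which upstream decisions fed it, what autonomy level governed it, and who (if anyone) approved it, so the lineage of any outcome can be walked back across agents.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Decision:
    """One decision made by one agent; every field name here is illustrative."""
    decision_id: str
    agent: str
    autonomy_level: str          # e.g. "recommend_only", "execute_within_limits"
    approved_by: Optional[str]   # None means the agent acted without human approval
    inputs: list[str] = field(default_factory=list)  # ids of upstream decisions


class AuditTrail:
    """Stores decisions and answers 'what led to this outcome?'."""

    def __init__(self) -> None:
        self._decisions: dict[str, Decision] = {}

    def record(self, decision: Decision) -> None:
        self._decisions[decision.decision_id] = decision

    def lineage(self, decision_id: str) -> list[Decision]:
        """Return the decision plus everything upstream of it, upstream first."""
        ordered: list[Decision] = []
        seen: set[str] = set()

        def visit(current_id: str) -> None:
            if current_id in seen:
                return
            seen.add(current_id)
            decision = self._decisions[current_id]
            for upstream_id in decision.inputs:
                visit(upstream_id)
            ordered.append(decision)

        visit(decision_id)
        return ordered

    def autonomous_steps(self, decision_id: str) -> list[str]:
        """Ids in the lineage that no human approved."""
        return [d.decision_id for d in self.lineage(decision_id) if d.approved_by is None]


trail = AuditTrail()
trail.record(Decision("d1", "demand_forecaster", "recommend_only", None))
trail.record(Decision("d2", "supplier_scout", "recommend_only", None, inputs=["d1"]))
trail.record(Decision("d3", "supervisor", "execute_within_limits", "ops_manager", inputs=["d1", "d2"]))

print([d.decision_id for d in trail.lineage("d3")])  # ['d1', 'd2', 'd3']
print(trail.autonomous_steps("d3"))                  # ['d1', 'd2']
```

The point of storing `inputs` alongside `autonomy_level` and `approved_by` is that a reviewer can ask two questions of any outcome at once: which agents contributed to it, and which of those contributions were never seen by a human.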

This is where bounded autonomy stops being a philosophical preference and becomes mandatory infrastructure. For a single agent, unbounded autonomy is a risk. For a team of agents, each making decisions that compound on each other's outputs, it's a combinatorial explosion of risk. Bounded autonomy provides the composable, auditable decision boundaries that make multi-agent coordination governable.

And the supervisor agent itself needs its own bounded autonomy—starting with narrow orchestration authority and earning expanded coordination powers as the system proves reliable. Progressive autonomy becomes recursive: who watches the watcher, and within what limits? In regulated industries, you can't just say "the AI decided." You need to show which AI decided what, within what authority, and who approved the expansion of that authority. Bounded autonomy gives you that structure. Unbounded autonomy gives you an unexplainable black box.

Progressive autonomy in practice

This isn't just theory. A global building materials company, which asked to remain anonymous because it views its agent strategy as a competitive differentiator, has deployed AI agents intentionally designed to be "just autonomous enough" for today while building the foundation for tomorrow's fuller autonomy. The team made a strategic choice: start with bounded autonomy that delivers immediate value while creating the infrastructure and experience needed for future expansion. They built their agents using MongoDB for persistent state management and LlamaIndex for intelligent document processing and retrieval.

Their journey illustrates how bounded autonomy expands over time across three patterns:

Crisis response with guardrails

When supply chain disruptions occur, their bounded autonomous agents analyze historical disruption patterns, identify similar past scenarios, and propose solutions—but still require human approval for major decisions like supplier changes or significant budget reallocations. What starts as "analyze and recommend" evolves to "analyze and execute within limits" and eventually to "handle entire categories of disruptions independently."

Intelligence that learns and grows

Beyond crisis response, bounded autonomous agents also serve as intelligent knowledge systems, autonomously organizing and retrieving relevant information from vast data stores. Initially, they only surface insights while leaving decisions to humans. As trust builds and patterns become clear, they gain authorization to make increasingly complex decisions. The infrastructure retains the expanding history of successful autonomous decisions, building an evidence base for when and how to safely extend capabilities.
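One way to picture an "evidence base" driving expansion is a simple promotion rule over reviewed outcomes. The sketch below is hypothetical: the level names, the `TrackRecord` type, and the thresholds (50 decisions, 98% success) are assumed values chosen for illustration, not figures from the company described here. The function only proposes a level; a human would still approve the change.

```python
from dataclasses import dataclass

# Ordered from most to least constrained; the level names are illustrative.
LEVELS = ["surface_insights", "recommend", "execute_within_limits"]


@dataclass
class TrackRecord:
    level: str
    outcomes: list[bool]  # True = a human reviewer judged the action correct


def next_level(record: TrackRecord, min_decisions: int = 50,
               min_success_rate: float = 0.98) -> str:
    """Propose the level an agent has earned; both thresholds are assumed values."""
    if len(record.outcomes) < min_decisions:
        return record.level  # not enough evidence yet
    success_rate = sum(record.outcomes) / len(record.outcomes)
    if success_rate < min_success_rate:
        return record.level  # evidence exists but does not justify expansion
    index = LEVELS.index(record.level)
    return LEVELS[min(index + 1, len(LEVELS) - 1)]  # never step past the top level


print(next_level(TrackRecord("surface_insights", [True] * 60)))  # recommend
print(next_level(TrackRecord("surface_insights", [True] * 10)))  # surface_insights
```

Requiring both a minimum sample size and a minimum success rate keeps a short lucky streak from widening an agent's authority.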

Graduated operational authority

When equipment failures occur on a manufacturing production line, bounded autonomous agents start by only diagnosing issues and searching maintenance documentation. As they prove reliable, they gain permission to propose maintenance schedules. Eventually, they autonomously order parts within pre-approved spending limits. Every autonomous action occurs within carefully designed constraints, with full observability—but those constraints evolve based on demonstrated reliability and business value.
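The graduated authority described above can be expressed as a small policy check. This is a sketch under assumptions: the `Authority` levels mirror the three stages in the paragraph, while the `Policy` type, the `route_order` function, and the dollar amounts are hypothetical.

```python
from dataclasses import dataclass
from enum import IntEnum


class Authority(IntEnum):
    DIAGNOSE = 1      # read-only: diagnose and search documentation
    PROPOSE = 2       # may also propose maintenance schedules
    ORDER_PARTS = 3   # may also order parts under a spending cap


@dataclass(frozen=True)
class Policy:
    authority: Authority
    spend_limit: float = 0.0  # only meaningful at ORDER_PARTS


def route_order(policy: Policy, cost: float) -> str:
    """Return 'execute' or 'escalate' for a proposed parts order."""
    if policy.authority < Authority.ORDER_PARTS:
        return "escalate"  # the agent has not earned ordering authority yet
    if cost > policy.spend_limit:
        return "escalate"  # over the pre-approved limit, a human decides
    return "execute"


early = Policy(Authority.DIAGNOSE)
mature = Policy(Authority.ORDER_PARTS, spend_limit=500.0)

print(route_order(early, 120.0))   # escalate
print(route_order(mature, 120.0))  # execute
print(route_order(mature, 900.0))  # escalate
```

Because the policy is data rather than code, expanding an agent's boundary means changing a record, which is itself an auditable event.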

The compound effect is powerful: each successful bounded deployment provides data and confidence for the next expansion. The company reports that development cycles dropped from three weeks to less than one day, that users noted a dramatic improvement in quality, that the agents deliver consistent and reliable value in production, and that business stakeholders trust the agents because they understand their boundaries. Most importantly, the foundation is set for progressively expanding those boundaries.

"Start with the business problem, not the tech," said the company's Principal Data Scientist. "Choose a framework with an active community that drops cleanly into your stack. That shortcut matters."

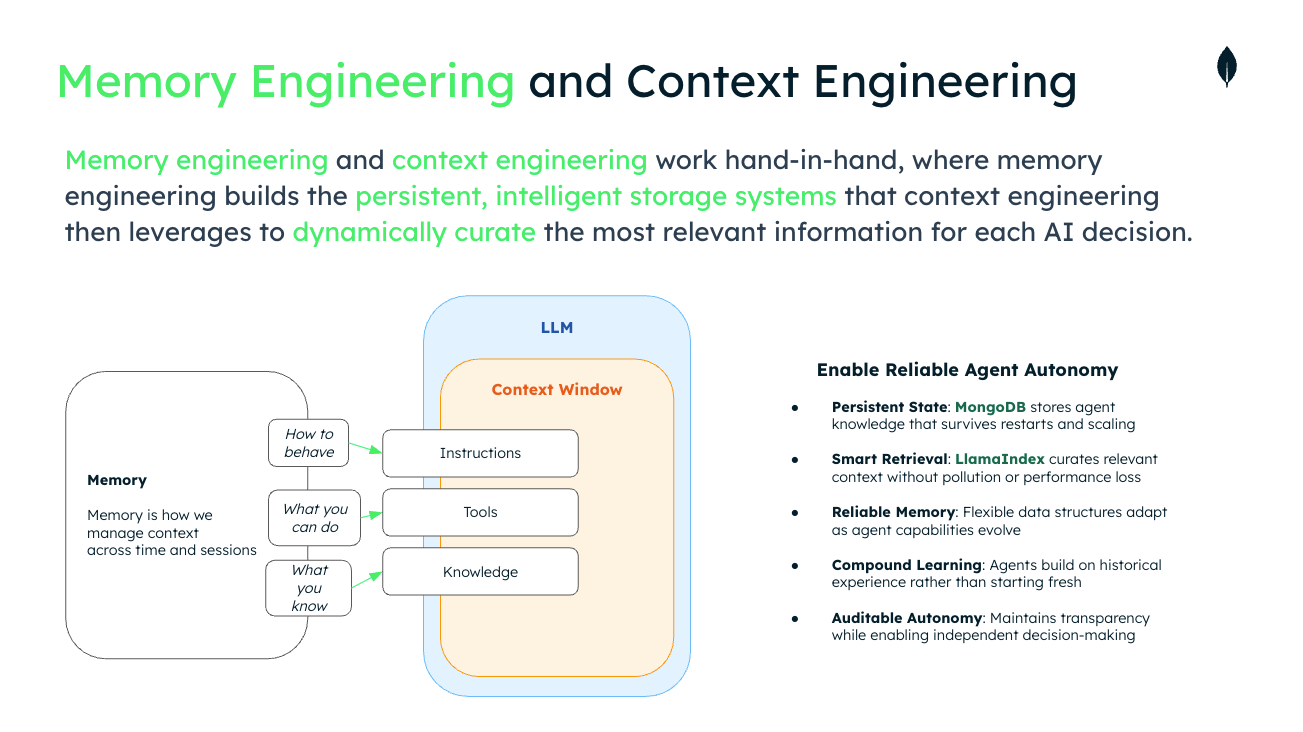

The infrastructure requirements: MongoDB + LlamaIndex

If you accept that bounded autonomy with progressive expansion is the right architecture—especially as multi-agent systems become the norm—your infrastructure stack needs to support very specific capabilities.

Persistent state that survives everything

MongoDB's document-oriented architecture naturally accommodates the complex, evolving state that agents generate throughout their autonomy journey: decision histories, expanding permission sets, learning patterns, and success metrics. Critically, MongoDB Atlas can be used to preserve the complete history of an agent's evolution, creating the audit trail that justifies further autonomy expansion. For multi-agent systems, this means tracking causal relationships across agent boundaries: which agent decided what, within what authority, and who approved the expansion of that authority.
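As a rough illustration of what one entry in such a history might look like, the sketch below builds an append-only "permission change" record as a plain Python dictionary. The field names, agent name, and evidence values are assumptions made for this example, not a MongoDB or Atlas schema. It runs without a database so the shape of the document is easy to inspect.

```python
import json
from datetime import datetime, timezone


def permission_change(agent_id: str, old_level: str, new_level: str,
                      approved_by: str, evidence: dict) -> dict:
    """Build one append-only history record; the field names are illustrative."""
    return {
        "agent_id": agent_id,
        "event": "permission_change",
        "from_level": old_level,
        "to_level": new_level,
        "approved_by": approved_by,   # who signed off on the expansion
        "evidence": evidence,         # the track record that justified it
        "recorded_at": datetime.now(timezone.utc).isoformat(),
    }


doc = permission_change(
    "maintenance_agent",
    "propose",
    "order_parts",
    approved_by="plant_manager",
    evidence={"decisions_reviewed": 120, "success_rate": 0.99},  # example values
)
print(json.dumps(doc, indent=2))
# With PyMongo, a record like this could be stored via collection.insert_one(doc).
```

Writing each change as a new document, rather than updating a single "current permissions" record in place, is what preserves the full history.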

Intelligent retrieval that scales with autonomy

LlamaIndex's retrieval systems enable agents to autonomously access and process information while respecting their current boundaries. As agents prove reliable, the same infrastructure supports expanded access without architectural changes—critical as autonomous boundaries expand and agents handle more complex, varied tasks.

Workflow orchestration for progressive autonomy

LlamaIndex's Workflows abstraction is particularly crucial. Event-driven, step-based execution clearly delineates autonomous and controlled components while making it simple to adjust boundaries over time. A workflow might evolve through stages: from "autonomously analyze, require approval, human executes" to "auto-approve within limits, autonomously execute simple actions" to "handle entire categories with human notification only for exceptions." The same infrastructure that enables bounded autonomy today supports fuller autonomy tomorrow.
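The staged evolution described above comes down to one adjustable gate between an autonomous step and an execution step. The following plain-Python sketch shows that shape. It deliberately does not use the LlamaIndex Workflows API; the `Proposal` type, the `analyze` and `run_workflow` functions, and the cost figures are hypothetical stand-ins.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Proposal:
    action: str
    cost: float


def analyze(disruption: str) -> Proposal:
    """Autonomous step; a real agent would call a model or retriever here."""
    return Proposal(action=f"expedite shipment for {disruption}", cost=1200.0)


def run_workflow(disruption: str, auto_approve_limit: float,
                 ask_human: Callable[[Proposal], bool]) -> str:
    """Analyze, then gate, then execute. Only the gate changes as autonomy grows."""
    proposal = analyze(disruption)
    if proposal.cost <= auto_approve_limit:
        approved, approver = True, "auto"   # inside the agent's current boundary
    else:
        approved, approver = ask_human(proposal), "human"
    if not approved:
        return f"rejected by {approver}: {proposal.action}"
    return f"executed ({approver} approval): {proposal.action}"


# Stage 1: every proposal goes to a human (limit of 0).
print(run_workflow("port closure", 0.0, ask_human=lambda p: True))
# executed (human approval): expedite shipment for port closure

# Stage 2: proposals under the limit are auto-approved.
print(run_workflow("port closure", 5000.0, ask_human=lambda p: False))
# executed (auto approval): expedite shipment for port closure
```

Moving from one stage to the next changes a single parameter, not the structure of the workflow, which is the property the paragraph above attributes to step-based execution.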

Production-ready for today and tomorrow

Both MongoDB and LlamaIndex are designed for enterprise-scale deployment with the reliability guarantees that production agents require, whether operating with today's bounded autonomy or tomorrow's expanded capabilities. The combined platform handles the complex journey from cautious beginnings to confident autonomous operation.

Building reliable autonomy today

The journey from bounded to full autonomy isn't just prudent—it's the only path that works in production. And as multi-agent architectures move from cutting-edge coding tools into regulated industries where human safety and compliance matter, bounded autonomy becomes the governance primitive that makes the entire system auditable and trustworthy.

Key principles for progressive autonomy:

Start bounded, think big: Begin with narrow autonomy that delivers immediate value while designing for future expansion.

Prioritize transparency: Use infrastructure that provides complete observability into agent decisions—especially across agent teams.

Expand through evidence: Let successful bounded operations justify the move toward broader autonomous capabilities.

Design for evolution: Choose platforms that support the entire autonomy spectrum.

Iterate on metrics: Track reliable data that demonstrates readiness for the next level of autonomy.

Compound success: Ensure each bounded win sets the technical foundation for the next expansion.

The partnership between MongoDB and LlamaIndex represents the kind of infrastructure convergence required for this next phase of autonomous agent development. By solving the reliability challenges that currently prevent autonomous agents from reaching production, this collaboration enables organizations to move beyond impressive demos toward autonomous systems that deliver sustained business value.

The future of autonomous agents doesn't require you to choose between intelligence and reliability. It lets you build intelligence on foundations reliable enough to support truly autonomous operation—whether that's a single bounded agent or an entire coordinated team. That future starts now.

Next steps

Ready to build reliable autonomous agents? Get started with LlamaIndex and MongoDB today. Explore our documentation and join thousands of developers already building production-ready AI systems.